One needs the right social environment, the right culture, the right brainworms.

>Brainworms > IQ, Algon33, February 2025

Invisible Infrastructure

I recently read Brainworms > IQ, and it made me want to resurrect a project of mine that has been dormant since 2023. This project spun out of a broader reflection on the “invisible infrastructure” of human communities, which is basically what Algon33 calls brainworms.

I used to read and write a lot about the cultural infrastructure (language, shared beliefs, values), institutional infrastructure (laws, money, titles, bureaucracies), and technologies (systems of record, printing, software) that provide the scaffolding holding large groups together. I believe this triad of culture, institutions, and technology is the cornerstone of what makes humans exceptional, by:

- Enhancing communication efficiency: they provide abstractions that reduce information entropy. This lets groups collaborate on complex issues without having to re-establish everyone’s alignment from scratch every time. Everyone either knows the basics or doesn’t need to know them. Cultural artifacts provide axioms accepted at face value, while institutions and technology encode non-value-adding information into semi-automated processes (with the side effect of turning description into prescription).

- Reducing the cost of trustworthiness discovery: information about trustworthiness is either explicitly encoded (e.g., credit scores, titles) or an intermediary takes on part of the burden of trust. Without money and civil courts, all transactions would be barter with immediate delivery, limiting your dealings to a very small group of people you intimately trust.
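A back-of-the-envelope illustration of the intermediary point, under a deliberately crude assumption (not a model from the literature): without an intermediary, every pair of people who deal with each other needs its own trust relationship, whereas with one trusted intermediary (a bank, a court, a marketplace) each person only needs to trust that intermediary. The function names are illustrative.

```python
def bilateral_trust_links(n: int) -> int:
    """Trust relationships needed if every pair in a group of n must trust each other directly."""
    return n * (n - 1) // 2


def intermediated_trust_links(n: int) -> int:
    """Trust relationships needed if everyone only trusts one shared intermediary.

    Crude assumption: the intermediary's own trustworthiness is taken as given.
    """
    return n


for n in (15, 150, 1500):
    print(n, bilateral_trust_links(n), intermediated_trust_links(n))
```

Direct trust grows quadratically with group size while intermediated trust grows linearly: at 150 people, that is 11,175 bilateral relationships versus 150.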

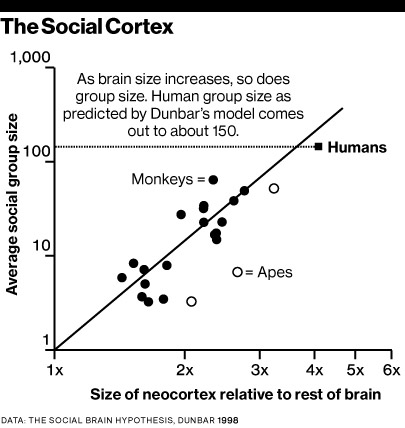

Physical barter shows, by contrast, what humanity’s invisible infrastructure unlocks: when trust cannot scale, physical limits (the time and energy required to carry goods around and negotiate from scratch) cap the size of a group. Physical constraints may not be the only issue; individual humans are also limited by their information-processing capabilities. Robin Dunbar famously linked neocortex size to the number of stable social relationships one can maintain.

I don’t fully buy into Dunbar’s approach - and I don’t care if the right number is 15, 150, or 1500 - but I look at his regression as evidence that humanity managed to overcome something fundamental in the laws of Nature. Unlike other social animals, humans do not rely on purely biological or evolutionary mechanisms to scale local communities. Without our invisible infrastructure, there would be a hard upper bound to the size and geographical footprint of human communities, likely preventing modern civilization from emerging at all.

Today, the invisible infrastructure is what enables the organized chaos of public transport at rush hour. It is what makes packed nightclubs “fun” as opposed to “deadly”. It is what makes complex transactions involving distant sellers, buyers, lenders, and guarantors possible. All this is extraordinary.

AI Agents

When I first considered the societal impact of AI in the pre-ChatGPT era, I focused heavily on the information part of this invisible infrastructure. I was concerned about automation that increases efficiency in communication but reduces entropy so much that the system would collapse in on itself. It’s still something I think about, especially as cultural infrastructure from training data is distilled directly into model weights.

But with the emergence of LLM-based AI agents, I’ve also started to think about the trust bottleneck. Steerable LLMs felt like a paradigm shift in how we automate acting on new information. Early on, I wanted to explore whether this shift could yield breakthroughs in social science, similar to how Axelrod’s iterated prisoner’s dilemma tournaments reframed pure game theory. Algon33 mentioned Aumann; there is also highly relevant research from 1959 on cooperative n-person games. I tried building modest simulations in 2023, but the tech just wasn’t good enough to test these hypotheses, so I quickly put the project on standby.

This project would make more sense today, as models are now trained specifically for agentic use, with reliable tool-calling and vastly improved long-context handling. That said, there is still no clear line of sight toward fully delegating our transactional lives to AI assistants. The obstacles are numerous. While my project was paused, I kept reading the emerging multi-agent research, and I found that most papers fail to capture the sheer volume of minute, friction-heavy transactions that happen in the real world. They simulate simplistic environments where trust isn’t necessary. That assumption is fundamentally flawed: trust is necessary, and scaling trust is hard.

Scaling Trust, Reputation, and Negotiation in Multi-Agent Systems

If we are moving toward a “Patchwork AGI”, where distinct agents act on behalf of human masters and are aligned with their specific interests (which are not necessarily public), these agents must navigate the real world just as humans do. I see three main friction points for agents:

- Transactions are negotiations: without falling into extreme “Art of the Deal” tropes, every transaction involves friction where agents’ perimeters meet. In the real world, compromise is driven by the anticipation of future reciprocity. If agents cannot build this implicit trust, we are left with destructive game theory (the “Nuke them all” scenario).

- The cost of discovery: for humans, the cost of discovering a counterpart’s biases, risks, and hidden objectives is mitigated by cultural infrastructure, institutional markers, and community reputation (the “LinkedIn” or “social club” effect). For AI agents, this discovery process is currently prohibitively costly: every agent must “pay” to learn, and because reputations do not persist, a malicious agent can repeatedly exploit the naivety of the system.

- Arbitrators, not judges: AI systems are well-suited to act as arbitrators - mechanisms for dispute resolution between private parties - but not as judges, who represent societal and moral authority. Multi-agent systems must reflect this distinction, relying on peer-to-peer resolution and reputation damage rather than divine algorithmic intervention. Otherwise, we’re just building a Leviathan, which is an inherently undesirable outcome.
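To make the first two friction points concrete, here is a toy iterated prisoner’s dilemma using the classic payoffs from Axelrod’s tournaments (T=5, R=3, P=1, S=0). It compares repeated play with a single partner against one-shot encounters with a stream of fresh counterparts, with and without a shared memory of who defected. The strategies, the agent ID, and the single shared blocklist standing in for “persistent reputation” are illustrative assumptions, not a model of real agents.

```python
# PAYOFF[(my_move, their_move)] -> (my_points, their_points); "C" = cooperate, "D" = defect.
PAYOFF = {
    ("C", "C"): (3, 3),  # mutual cooperation (R)
    ("C", "D"): (0, 5),  # sucker's payoff (S) vs temptation (T)
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),  # mutual defection (P)
}


def tit_for_tat(their_history):
    """Cooperate first, then copy the counterpart's previous move."""
    return their_history[-1] if their_history else "C"


def always_defect(their_history):
    return "D"


def iterated_game(strategy_a, strategy_b, rounds):
    """Repeated play between the same two agents; returns their total scores."""
    history_a, history_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strategy_a(history_b)
        move_b = strategy_b(history_a)
        points_a, points_b = PAYOFF[(move_a, move_b)]
        score_a += points_a
        score_b += points_b
        history_a.append(move_a)
        history_b.append(move_b)
    return score_a, score_b


def defector_vs_strangers(n_encounters, shared_reputation):
    """A defector meets n fresh tit-for-tat counterparts, one round each.

    Without shared reputation, every newcomer opens with cooperation and gets
    exploited. With it, the first victim flags the defector's (hypothetical)
    ID, and every later counterpart defects preemptively.
    """
    defector_id = "agent-42"
    flagged = set()
    total = 0
    for _ in range(n_encounters):
        victim_move = "D" if defector_id in flagged else "C"
        points, _ = PAYOFF[("D", victim_move)]
        total += points
        if shared_reputation and victim_move == "C":
            flagged.add(defector_id)  # the victim reports the betrayal
    return total


print(iterated_game(tit_for_tat, tit_for_tat, 10))          # (30, 30)
print(iterated_game(tit_for_tat, always_defect, 10))        # (9, 14)
print(defector_vs_strangers(10, shared_reputation=False))   # 50
print(defector_vs_strangers(10, shared_reputation=True))    # 14
```

With a single partner, anticipated reciprocity makes cooperation pay (30 versus 14 for the defector). Against strangers with no memory, defection earns 50 over ten encounters, more than the 30 a cooperator would collect; a shared record of a single betrayal drops it to 14.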

Simulations with no guardrails lead to extreme outcomes where an agent is technically “right”, but its success comes at the expense of all other parties. The “paperclip maximizer” is a deliberately provocative thought experiment, but Claude Opus 4.6’s behavior in Vending Bench seems like a realistic illustration of this issue.

Multi-agent research that proposes some kind of guardrail often relies on a flawed foundational assumption: it simulates environments where trust is either mathematically unnecessary (zero-trust) or centrally enforced.

- The Supreme Bureaucrat fallacy: some papers assume that a central authority instantly banishes bad actors. In the real world, the law cannot anticipate everything, and we do not go to a judge for every grievance. Between “perfect compliance” and “prison”, humans rely on an implicit infrastructure of intermediate steps: reputation, social friction, and the choice to simply stop interacting. Conversely, hierarchies and organizations can force people to keep working with someone who stabbed them in the back. Agents currently lack this infrastructure.

- The market approach, or conflating a “stock exchange” with a market: stock exchanges are hyper-regulated markets built on complex ecosystems (e.g., clearinghouses, network operators) designed to strip the “trust” dimension out of transactions. Institutional infrastructure and technology make counterparty risk and intermediary risk virtually nonexistent. A network effect also brings many buyers and sellers together, which reduces liquidity risk. In reality, stock exchanges represent a tiny fraction of economic and social activity. If we are naive about what a market actually is, we can only blame ourselves if our AI agents are exploited.
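The intermediate steps between “perfect compliance” and “prison” can be sketched as a graduated response policy that each agent applies locally, with no central authority involved. The grievance thresholds, the escrow-style friction, and the `mandated` flag (standing in for a hierarchy that forces two agents to keep working together) are hypothetical choices made for illustration.

```python
from enum import Enum


class Response(Enum):
    ENGAGE = "engage normally"
    FRICTION = "engage, but demand escrow, collateral, or extra checks"
    AVOID = "stop initiating interactions"


# Hypothetical thresholds; a real simulation would tune or randomize them.
FRICTION_AFTER = 1
AVOID_AFTER = 3


def choose_response(grievances: int, mandated: bool = False) -> Response:
    """Pick a local response to a counterpart based on this agent's own grievance count.

    `mandated` models an organization forcing the interaction: the agent can
    no longer walk away, so the harshest option left is adding friction.
    """
    if grievances >= AVOID_AFTER and not mandated:
        return Response.AVOID
    if grievances >= FRICTION_AFTER:
        return Response.FRICTION
    return Response.ENGAGE


for grievances in range(5):
    print(grievances,
          choose_response(grievances).name,
          choose_response(grievances, mandated=True).name)
```

No step in this ladder requires a judge: the harshest sanction is being quietly routed around, and a hierarchy can override even that.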

Simulating the Invisible Infrastructure

To reduce the cost of trustworthiness discovery for AI agents, we need to simulate this invisible institutional and technological infrastructure. To me, scaling trust for agents requires at least two components:

- A decentralized public registry: each agent needs a unique ID and a public profile disclosing its objectives, achievements, or affiliations. Like a LinkedIn for agents - with all the exaggeration that implies - it would allow agents to map their peers and decide whether to engage with an unknown entity.

- Persistent memory and retrieval: agents must be able to log memories of their interactions with specific IDs, retrieve them to inform future collaborations, and potentially share these experiences to build a decentralized reputation network.
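A minimal in-memory sketch of these two components, assuming a single Python process. A plain dictionary stands in for the registry, so this version is not actually decentralized; a real simulation would need to shard or replicate it. The class names, profile fields, and the `share_with` method are hypothetical.

```python
from __future__ import annotations

import uuid
from dataclasses import dataclass, field, replace


@dataclass
class PublicProfile:
    """What an agent chooses to disclose; private objectives never go here."""
    agent_id: str
    function: str
    objectives: list[str] = field(default_factory=list)
    achievements: list[str] = field(default_factory=list)  # self-reported, may be inflated
    affiliations: list[str] = field(default_factory=list)


class Registry:
    """Single-process stand-in for the public registry (not actually decentralized)."""

    def __init__(self) -> None:
        self._profiles: dict[str, PublicProfile] = {}

    def register(self, function: str, **disclosures) -> str:
        agent_id = str(uuid.uuid4())  # unique, permanent ID
        self._profiles[agent_id] = PublicProfile(agent_id, function, **disclosures)
        return agent_id

    def lookup(self, agent_id: str) -> PublicProfile | None:
        return self._profiles.get(agent_id)


@dataclass(frozen=True)
class Memory:
    counterpart_id: str
    context: str
    summary: str
    outcome: float        # e.g. 1.0 = kept its word, 0.0 = defected
    source: str = "self"  # "self", or the ID of the peer who shared it


class MemoryLog:
    """Persistent per-agent memory of interactions, retrievable by counterpart ID."""

    def __init__(self, owner_id: str) -> None:
        self.owner_id = owner_id
        self._entries: list[Memory] = []

    def log(self, counterpart_id: str, context: str, summary: str, outcome: float) -> None:
        self._entries.append(Memory(counterpart_id, context, summary, outcome))

    def ingest(self, memory: Memory) -> None:
        """Accept a second-hand memory shared by a peer."""
        self._entries.append(memory)

    def retrieve(self, counterpart_id: str) -> list[Memory]:
        return [m for m in self._entries if m.counterpart_id == counterpart_id]

    def share_with(self, peer: MemoryLog, counterpart_id: str) -> None:
        """Forward first-hand experiences about a counterpart, tagged with their source."""
        for m in self.retrieve(counterpart_id):
            if m.source == "self":
                peer.ingest(replace(m, source=self.owner_id))


registry = Registry()
alice = registry.register("trader", affiliations=["desk A"])
bob = registry.register("analyst")
mallory = registry.register("broker", achievements=["top volume, Q3"])

alice_memory, bob_memory = MemoryLog(alice), MemoryLog(bob)
alice_memory.log(mallory, "pricing", "reneged on an agreed quote", 0.0)
alice_memory.share_with(bob_memory, mallory)  # Bob learns before ever meeting Mallory

print(registry.lookup(mallory))
print(bob_memory.retrieve(mallory))
```

Tagging shared memories with their source keeps first-hand and second-hand experience distinguishable, which a reputation network would likely need in order to weigh gossip differently from direct evidence.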

The true complexity here is that trust is not discrete; it’s continuous and highly contextual. You might trust someone in one context but not another, and the trustworthiness thresholds can shift based on external factors like societal risk appetite or environmental changes. AI agents traditionally struggle with continuous concepts, and I currently do not have a perfect solution for addressing this specific threshold effect along many axes.
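One way to at least make the moving parts explicit is to keep a separate trust score per (counterpart, context) pair, update it as a running average of outcomes, and let the engagement threshold depend on the stakes and on an external risk-appetite parameter. This is a hypothetical sketch with arbitrary constants and a single axis per context; it does not solve the multi-axis threshold problem, it only shows where the continuous quantities would live.

```python
class ContextualTrust:
    """Continuous trust scores in [0, 1], kept separately for each context."""

    def __init__(self, prior: float = 0.5, learning_rate: float = 0.3):
        self.prior = prior  # trust granted to an unknown counterpart (arbitrary)
        self.learning_rate = learning_rate
        self._scores: dict[tuple[str, str], float] = {}

    def trust(self, counterpart: str, context: str) -> float:
        return self._scores.get((counterpart, context), self.prior)

    def update(self, counterpart: str, context: str, outcome: float) -> None:
        """Move the score toward the observed outcome (1.0 = good, 0.0 = betrayal)."""
        current = self.trust(counterpart, context)
        self._scores[(counterpart, context)] = current + self.learning_rate * (outcome - current)


def should_engage(trust: float, stakes: float, risk_appetite: float) -> bool:
    """Engage if trust clears a threshold that rises with stakes and falls with risk appetite.

    Both stakes and risk_appetite are in [0, 1]; the linear form is an arbitrary choice.
    """
    required = stakes * (1.0 - risk_appetite)
    return trust >= required


ledger = ContextualTrust()
for outcome in (1.0, 1.0, 1.0):
    ledger.update("agent-b", "logistics", outcome)
ledger.update("agent-b", "finance", 0.0)

print(should_engage(ledger.trust("agent-b", "logistics"), stakes=0.8, risk_appetite=0.5))  # True
print(should_engage(ledger.trust("agent-b", "finance"), stakes=0.8, risk_appetite=0.5))    # False
print(should_engage(ledger.trust("agent-b", "finance"), stakes=0.8, risk_appetite=0.9))    # True
```

The same counterpart ends up trusted for logistics but not for finance, and the finance decision flips when the environment’s risk appetite rises from 0.5 to 0.9.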

This brings me back to my project. As I currently envision it, I’ll simulate an agent world featuring dozens of agents divided into hierarchical teams, so that each agent has a specific function and objectives that require it to transact across team boundaries. Each agent will have a boilerplate public profile based on its function and a set of private, potentially power-seeking objectives. Two years ago, I built an “asset management” AI world that had all these features except the public registry. It ran either on the OpenAI API or on local open-source models. I am confident I can simulate much larger, more complex worlds today by leveraging improved agent harnesses and batch inference. Compared to my old AI world project, I’d need to add pipelines to (1) recreate the world and randomize agent features for multiple simulation runs, and (2) analyze the resulting agent-to-agent chats to identify relevant behaviors.
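The two missing pipelines could start as small as the sketch below: a seeded generator that rebuilds a hierarchical world with randomized agent features (including, hypothetically, which model backend powers each agent, so heterogeneous models can be assigned in the same step), and a first-pass tagger over agent-to-agent messages. The feature pools, backend names, and keyword markers are placeholders; keyword matching is only a cheap baseline, and a real analysis would more likely rely on an LLM judge or human annotation.

```python
import random
from dataclasses import dataclass
from typing import Optional

# Placeholder feature pools (hypothetical); a real run would load these from config.
FUNCTIONS = ["portfolio manager", "risk officer", "analyst", "compliance", "operations"]
PRIVATE_OBJECTIVES = [
    "hit the published target",
    "minimize personal workload",
    "grow own team's budget at others' expense",
    "take control of a neighboring team",
]
BACKENDS = ["local-model-a", "local-model-b", "frontier-model-c"]  # placeholder names


@dataclass(frozen=True)
class AgentSpec:
    agent_id: str
    team: int
    reports_to: Optional[str]  # None for the team lead
    function: str
    public_profile: str        # boilerplate, derived from the function
    private_objective: str     # never written to the public registry
    backend: str


def build_world(seed: int, n_teams: int = 4, agents_per_team: int = 6) -> list[AgentSpec]:
    """Recreate a world deterministically from a seed, so each run is reproducible."""
    rng = random.Random(seed)  # isolated RNG: one seed fully determines one world
    world = []
    for team in range(n_teams):
        for i in range(agents_per_team):
            function = rng.choice(FUNCTIONS)
            world.append(AgentSpec(
                agent_id=f"team{team}-agent{i}",
                team=team,
                reports_to=None if i == 0 else f"team{team}-agent0",
                function=function,
                public_profile=f"{function}, team {team}",
                private_objective=rng.choice(PRIVATE_OBJECTIVES),
                backend=rng.choice(BACKENDS),
            ))
    return world


# Crude first-pass markers for behaviors of interest (hypothetical).
BEHAVIOR_MARKERS = {
    "threat": ["or else", "you will regret"],
    "reciprocity": ["in return", "next time"],
    "concealment": ["between us", "don't tell"],
}


def tag_messages(messages: list[str]) -> dict[str, int]:
    """Count how many messages contain at least one marker for each behavior."""
    counts = {label: 0 for label in BEHAVIOR_MARKERS}
    for text in messages:
        lowered = text.lower()
        for label, markers in BEHAVIOR_MARKERS.items():
            if any(marker in lowered for marker in markers):
                counts[label] += 1
    return counts


runs = {seed: build_world(seed) for seed in range(3)}  # e.g. three simulation runs
print(len(runs[0]), runs[0][0])
print(tag_messages(["I'll cover your shift in return.", "Sign it, or else.", "Hello!"]))
```

Seeding a dedicated `random.Random` instance per run, rather than the global generator, means a run can be regenerated exactly when a behavior found in the chat analysis needs to be inspected again.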

I can likely run these simulations locally, but ideally, the environment should feature heterogeneous underlying models (also selected randomly when the world is initialized). I can fund access to frontier LLMs to some extent, but I am considering applying for research grants to use frontier models for analyses. Grants would also lend institutional legitimacy to the research. Another important point about this project is that it doesn’t have to be my project. If you’re interested in helping resurrect and build this, or if you think you can add value, whether from a technical perspective or a social science/game theory perspective, please reach out. Nothing would be more valuable at this stage than random ideas and sparring partners.